The Expectation Stack: Why AI Fatigue is Actually an Architecture Problem

Moving beyond “AI fatigue” to porous enrichment systems: enterprise-locked, oceanic-inspired agentic AI models where humans stay at the core, in control, and at the center.

Executive Brief (for the busy strategist)

The Problem: “AI Fatigue” is usually a mismatch between enterprise needs and consumer-tier configurations.

The Solution: GW Agentic AI Interoperability™. We architect “porous” systems where porosity ≠ permissive: open composition demands stricter boundaries (tool gating, isolation, auditability), enforced through zero-trust identity using Okta OAuth patterns.

The Core Philosophy: Living Ecosystems where ensembles beat idols. Don’t bet on one model; orchestrate by task shape, latency, privacy posture, and brand voice.

The Objective: A compounding edge from weekly shipping with visible evaluation loops. Not “rip and replace,” but “uplift and govern” using GW Agentic AI Interoperability™.

The Mantra: Enrich the dataset. Route the work. Gate the tools. Evaluate the drift.

This Is Uplift, Not Rip‑and‑Replace

GlobalizeWe is about positive interconnect. Not “connect everything.” Not “burn it down.” It’s the more practical, harder thing: build what you can from what you have—in energetic, agentic AI—without letting the system outrun the governance.

Because in enterprise, speed without governance isn’t innovation. It’s a future incident report.

Your tools aren’t “wrong.” They’re just under-orchestrated. Most enterprises own powerful tools that act like islands. Real value appears when those tools become interoperable inside one governed lattice where:

Knowledge moves safely.

Actions are permissioned.

Outputs are evaluated.

Drift becomes visible.

Free tiers are incredible on‑ramps. But they’re not baselines for enterprise capability. Testing the lowest‑capability configuration and generalizing the result is like test-driving a base trim in a hailstorm and writing off the entire platform.

From the trenches: I’ve watched teams declare a model “washed” after one bad week—then recover performance without changing the model at all. The fix lived upstream: context construction, retrieval scope, tool permissions, and a missing evaluation loop. Once you’ve shipped through enough release cycles, you stop worshipping model names and start instrumenting systems.

At GlobalizeWe, we treat this gap not as a failure of intelligence, but as a design flaw in adoption. Our work is about turning that mismatch into an advantage through GW Agentic AI Interoperability™: swappable models, task-scoped memory, and evaluation loops that make progress visible.

Because here’s the inconvenient truth about AI in 2026:

Capability ≠ Commodity.

Configuration, context, and orchestration matter more than headlines.

The Reality: Ensembles Beat Idols

Rather than arguing theology over the “One True Model,” we map constraints. The list will keep shifting—GPT, Gemini, Claude, Llama, Grok, Copilot, Perplexity. Trying to pick a permanent champion is like trying to choose one ocean current and calling it the sea.

The choice isn’t binary. It’s an orchestration problem. We route work based on:

Task Shape: Reasoning vs. Retrieval vs. Code vs. Creative.

Latency Budget: Interactive speed vs. batch depth.

Privacy Posture: Cloud vs. Self-hosted vs. Hybrid.

Evaluation Signals: Does it match your brand voice and risk tolerance?
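To make the routing idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not GlobalizeWe's actual router: the model names, latency figures, and the "fastest eligible candidate wins" rule are all hypothetical, and a real policy would also weigh evaluation signals such as brand voice and risk tolerance.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Task:
    shape: str               # "reasoning", "retrieval", "code", or "creative"
    latency_budget_s: float  # how long the caller is willing to wait
    data_sensitivity: str    # "public" or "restricted"


@dataclass(frozen=True)
class ModelProfile:
    name: str
    shapes: frozenset
    typical_latency_s: float
    self_hosted: bool


# Hypothetical catalog: names and numbers are placeholders, not benchmarks.
CATALOG = [
    ModelProfile("hosted-large", frozenset({"reasoning", "creative", "code"}), 8.0, False),
    ModelProfile("hosted-fast", frozenset({"retrieval", "creative"}), 1.0, False),
    ModelProfile("local-small", frozenset({"retrieval", "code"}), 2.0, True),
]


def route(task: Task, catalog=CATALOG) -> Optional[str]:
    """Return the name of an eligible model, or None if a human should decide."""
    candidates = [
        m for m in catalog
        if task.shape in m.shapes                            # task shape
        and m.typical_latency_s <= task.latency_budget_s     # latency budget
        and (m.self_hosted or task.data_sensitivity != "restricted")  # privacy posture
    ]
    if not candidates:
        return None  # nothing fits the constraints: escalate instead of guessing
    # Assumed tie-break: prefer the fastest eligible model.
    return min(candidates, key=lambda m: m.typical_latency_s).name


print(route(Task("code", 3.0, "restricted")))
```

Returning None when no model satisfies the constraints is a deliberate choice in this sketch: the router hands the decision back to a person rather than quietly relaxing the privacy or latency rule.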

When teams say “the model got worse,” I ask a different question:

Did your system get less legible?

Because if you can’t see the stack—how context is built, what retrieval pulled, what tools were used, what the eval loop flagged—then every update feels like roulette.

Porosity as a Design Principle

We treat openness as a system choice. We call this Porosity.

Porosity (GlobalizeWe definition):

Not permissive. Not fragile. Designed to evolve.

Here’s the core shift: you don’t “adopt AI.” You architect a lattice—a living system where legacy knowledge, modern models, and existing tools become interoperable under strict control.

Porosity is less about learning more tools and techniques, and more about architecture that stays coherent under change.

What porosity looks like in practice:

Porous enough to cross-pollinate components (models, vector stores, UIs) without collapse.

Enriched enough that legacy datasets stop behaving like archives and start behaving like infrastructure.

Compose: automation glue + function calling + retrieval over brand corpora so intelligence resonates across UX/UI.

Evolve: ship small, measure, refactor. There is no “done,” only a living meliorism.

Governed enough that every action has boundaries and an audit trail.

Porosity is not a slogan. It’s a survival trait. The world you’re building in is not stable. Model behavior drifts, costs shift, and compliance tightens. If your posture is "perfection," you will break. If your posture is adaptable control, you will win.

Sovereign Agentic AI: We Decide.

“Sovereign” doesn’t mean “we build our own LLMs from scratch.” It means: We decide.

We decide where data lives. We decide what can be retrieved. We decide who has the final say when stakes are real.

In the real world, this often means Small Language Models (SLMs) and specialized agents doing the serious work. They are cheaper to run, easier to constrain, and easier to deploy under an enterprise security posture.

Identity is the Control Plane

This is the part too many teams skip until it hurts. We use Okta OAuth patterns prescriptively so “agentic” never becomes “ambient.”

No magical permissions.

No invisible tool sprawl.

No silent data bleed.

Just explicit, reviewable access: Least privilege. Task-scoped tokens. Gated tool execution. That’s how you keep humans at the center without slowing the org to a crawl.
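A minimal sketch of gated tool execution, in Python. It does not call Okta or any real identity provider: `TaskToken`, `REQUIRED_SCOPE`, and the scope strings are hypothetical stand-ins for whatever claims an OAuth access token would carry in a real deployment. The point is only to show the shape of the check that runs before a tool is allowed to execute.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskToken:
    task_id: str
    scopes: frozenset   # scopes granted for this one task only
    expires_at: float   # epoch seconds; short-lived by design


class ToolDenied(Exception):
    """Raised when a tool call falls outside the token's grant."""


# Hypothetical registry mapping each tool to the single scope it requires.
REQUIRED_SCOPE = {
    "cms.read": "cms:read",
    "cms.publish": "cms:publish",
}


def call_tool(token: TaskToken, tool: str, run, now=None):
    """Run `run()` only if the token is live and carries the tool's scope."""
    now = time.time() if now is None else now
    required = REQUIRED_SCOPE.get(tool)
    if required is None:
        raise ToolDenied(f"unknown tool: {tool}")  # no invisible tool sprawl
    if now >= token.expires_at:
        raise ToolDenied(f"token for task {token.task_id} has expired")
    if required not in token.scopes:
        raise ToolDenied(f"task {token.task_id} lacks scope {required}")
    return run()


token = TaskToken("draft-42", frozenset({"cms:read"}), expires_at=time.time() + 300)
print(call_tool(token, "cms.read", lambda: "fetched article"))
```

With this token, a call to `cms.publish` raises `ToolDenied` because the task was never granted `cms:publish`. Unknown tools are denied by default rather than allowed.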

The Solution: Oceanic-Inspired Systems Thinking

Our approach borrows from ocean dynamics: read the currents, sample across depths, separate signal from echo. We employ Coral Reef Nodes™—modular, swappable building blocks that compose into a brand engine.

Coral Reef Nodes™

Ingest Node: Connects CMS/DAM; normalizes inputs; handles redaction.

Enrich Node: Applies embeddings, taxonomy, and locale signals.

Orchestrate Node: Handles multi‑model routing and tool access patterns.

Evaluate Node: Runs voice alignment and cultural resonance checks.

Govern Node: Manages audit trails and deletion workflows.

Activate Node: Publishes to channels (Substack, Social, Web) with analytics back-pressure.
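One way to picture nodes as swappable building blocks is a list of plain functions that each take a record and return a new one. The Python sketch below is illustrative only: the `ingest` and `enrich` functions, the email-only redaction, and the keyword "tags" are hypothetical simplifications, not the actual node implementations.

```python
import re

# Deliberately simple pattern, for illustration only; real redaction needs more care.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def ingest(record: dict) -> dict:
    """Normalize whitespace and redact email addresses."""
    text = " ".join(record["text"].split())
    return {**record, "text": EMAIL.sub("[redacted]", text)}


def enrich(record: dict) -> dict:
    """Attach a locale signal and naive keyword tags (placeholder for embeddings/taxonomy)."""
    words = (w.lower().strip(".,") for w in record["text"].split() if "[" not in w)
    tags = sorted({w for w in words if len(w) > 6})
    return {**record, "locale": record.get("locale", "en-US"), "tags": tags}


def run_pipeline(record: dict, nodes) -> dict:
    """Apply nodes in order and record which ones ran, as a minimal audit trail."""
    trail = []
    for node in nodes:
        record = node(record)
        trail.append(node.__name__)
    return {**record, "audit_trail": trail}


result = run_pipeline(
    {"text": "Contact  ana@example.com about the   campaign."},
    [ingest, enrich],
)
print(result)
```

Because each node has the same signature, replacing `enrich` with a different implementation means changing one entry in the list, and the audit trail shows which version of the pipeline produced a given record.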

What we keep intentionally gated:

The scoring rubrics, routing thresholds, and evaluation checklists that make these nodes operational week-to-week. (That’s the difference between “a diagram” and “an engine.”)

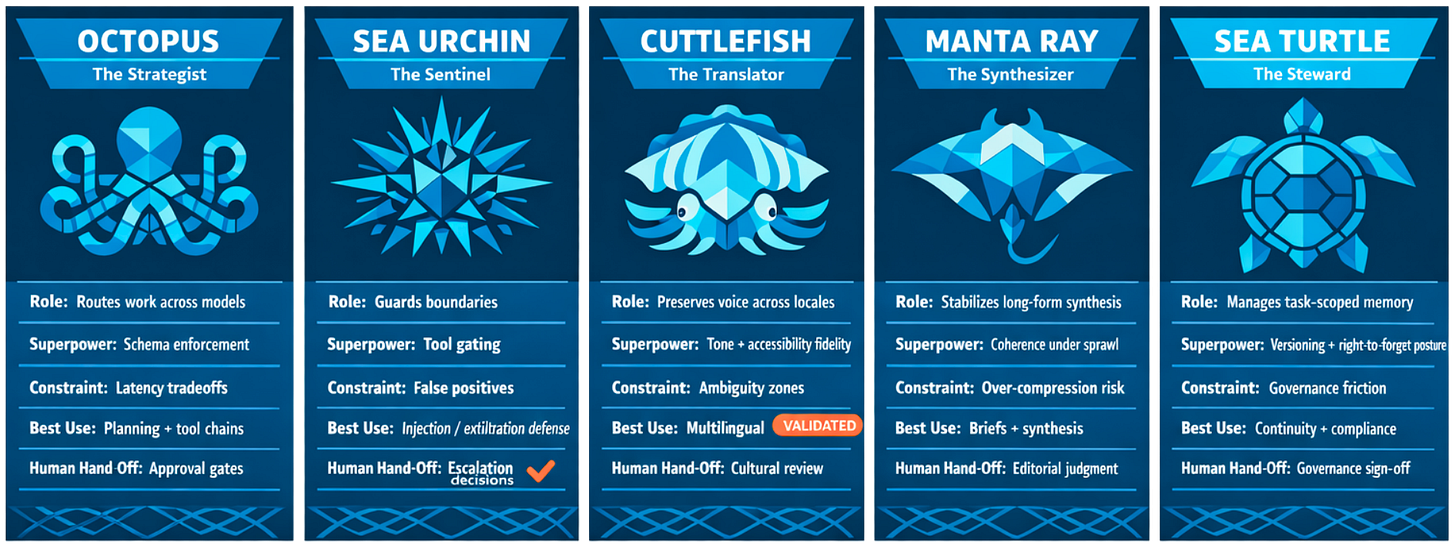

Meet the Ocean Cohort

These aren’t “bots.” They are roles designed to amplify human teams.

🐙 Octopus, The Strategist: Routes across models, shapes the plan, enforces typed structure. Human hand-off: Decision gates and final intent.

🐡 SeaUrchin, The Sentinel: Detects prompt injection and enforces least-privilege tool access. Human hand-off: Escalation calls.

🦑 Cuttlefish, The Translator: Preserves brand voice across languages and accessibility contexts. Human hand-off: Cultural review and tone confirmation.

🦈 MantaRay, The Synthesizer: Stabilizes long-form reasoning from sprawling sources without losing the thread. Human hand-off: Editorial judgment and claim verification.

🐢 SeaTurtle, The Steward: Manages task-scoped memory, versioning, and right-to-forget workflows. Human hand-off: Governance sign-off.

Why roles matter:

Ensembles beat idols because work is not one shape. Publishing is not analysis. Analysis is not governance. Governance is not translation. The system should reflect that reality instead of flattening it.

Academia vs Operating Reality

We value scholarship. But preprint-to-production cycles move too slowly for teams shipping weekly.

Brands need partners tuned to release-by-release drift: how model behavior changes, how costs shift, how tool ecosystems evolve, how safety boundaries need to tighten as systems become more composable.

GlobalizeWe works inside-out (data posture, risk, governance) while mapping outside-in (model deltas, cost curves, toolchain changes). Not because we like complexity—but because pretending it isn’t there is how systems fail in public.

Security in Porous Systems

Porous ≠ permissive.

Open systems require stricter boundaries, not weaker ones. Interoperability without governance is just chaos with better marketing.

Zero-trust boundaries we insist on:

Tenant Isolation: brand-scoped retrieval with strict namespaces

Ephemeral Context: no ambient memory by default; opt-in, time-bounded caches

Vendor Portability: swappable model adapters so you’re never locked in

Tool Gating: per-task API scopes with least privilege

Auditability: traceable actions humans can review and reverse

If your system can’t explain what it did, it doesn’t get to do more.
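Two of these boundaries, tenant isolation and ephemeral context, can be sketched together in a few lines of Python. This is an assumption-laden illustration, not a production design: `EphemeralContext` is a hypothetical in-memory store, and a real system would back it with a service that enforces the same namespace and expiry rules.

```python
import time


class EphemeralContext:
    """Opt-in, time-bounded cache keyed by (tenant, key), so tenants never share entries."""

    def __init__(self, ttl_seconds: float):
        self.ttl = ttl_seconds
        self._items = {}  # (tenant, key) -> (value, expires_at)

    def put(self, tenant: str, key: str, value, now=None) -> None:
        now = time.time() if now is None else now
        self._items[(tenant, key)] = (value, now + self.ttl)

    def get(self, tenant: str, key: str, now=None):
        now = time.time() if now is None else now
        entry = self._items.get((tenant, key))
        if entry is None:
            return None  # nothing stored under this tenant's namespace
        value, expires_at = entry
        if now >= expires_at:
            del self._items[(tenant, key)]  # expired context is dropped, not served
            return None
        return value


ctx = EphemeralContext(ttl_seconds=60)
ctx.put("brand-a", "campaign-brief", "Q3 launch notes")
print(ctx.get("brand-a", "campaign-brief"))
print(ctx.get("brand-b", "campaign-brief"))
```

The second lookup returns None because the tenant name is part of the key: a request scoped to brand-b cannot see brand-a's entry even when the key name matches.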

Glossary

Expectation Stack: the assumptions people layer onto “AI” based on tier, setup, UI constraints, and context construction.

Expectation Stack Mismatch: judging the platform using a configuration that doesn’t match the intended operating conditions.

Porosity: swap-ability without collapse—open composition with strict boundaries.

GW Agentic AI Interoperability™: an orchestration approach that keeps models swappable, memory scoped, and evaluation visible.

Coral Reef Nodes™: modular building blocks that compose into a brand engine.

Ocean Cohort: role-based agentic AI models that augment real workflows, creative intelligence, and cultural data in support of human-at-the-core Agency 2.0 publishing.

From Possibility to Actuality

Residual value is what’s left after novelty fades. Teams practicing clear context and constraints get compounding returns. Teams relying on a single prompt inside a generic chat interface experience each update like a coin flip.

Heuristics you can borrow today:

Start with the outcome, not the tool.

Treat models as interchangeable parts.

Prefer weekly shipped wins over big-bang migrations.

Instrument evaluation so drift becomes visible.
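The last heuristic is the easiest to start on. A minimal sketch in Python, assuming you already score a fixed set of evaluation cases on each release: the case names, the 0-to-1 score scale, and the 0.05 tolerance are hypothetical, and how the scores are produced is out of scope here.

```python
from statistics import mean


def drift_report(baseline: dict, current: dict, tolerance: float = 0.05) -> dict:
    """Compare per-case scores between two runs of the same evaluation set."""
    shared = sorted(set(baseline) & set(current))
    if not shared:
        raise ValueError("no shared evaluation cases to compare")
    base_mean = mean(baseline[c] for c in shared)
    cur_mean = mean(current[c] for c in shared)
    return {
        "baseline_mean": base_mean,
        "current_mean": cur_mean,
        "delta": cur_mean - base_mean,
        # Cases whose score dropped by more than the tolerance.
        "regressed_cases": [c for c in shared if baseline[c] - current[c] > tolerance],
        # Overall flag: the average dropped by more than the tolerance.
        "drifted": base_mean - cur_mean > tolerance,
    }


last_week = {"brand_tone": 0.9, "fact_check": 0.8}
this_week = {"brand_tone": 0.6, "fact_check": 0.8}
print(drift_report(last_week, this_week))
```

Running the same comparison after every model, prompt, or retrieval change turns “the model got worse” into a named list of regressed cases that a person can inspect.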

The Question I’ll Leave You With:

Where in your stack have you hardened a belief about “the right tool” into dogma—when what you actually need is a lattice that survives the next release cycle?

Where is your organization most “under-orchestrated” right now: legacy datasets, tool sprawl, identity, or evaluation?

Challenge yourself to visualize that lattice. Then let’s architect the agentic enrichment layers.

Consult Manta: Your Vision. Our Modular Intelligence. Let’s Make it Resonant.

Ex Possibilitate Actualitas